Metro Tunnel Melbourne

Birthing a Digital Command Centre

Integrating Data, Decisions and Operations

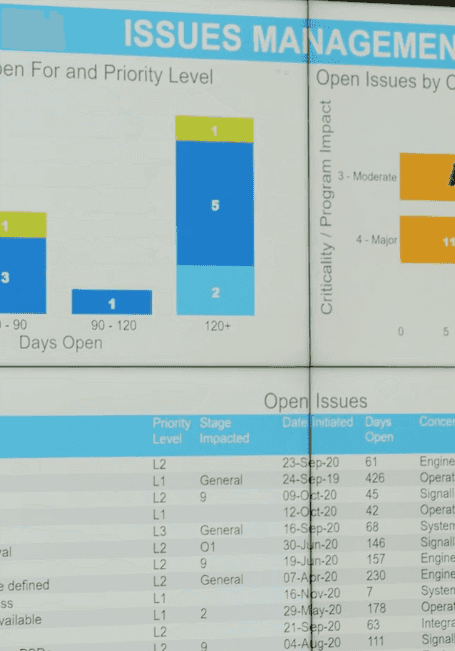

Working with alliance partners commissioned to design, construct and deliver the Metro Tunnel Melbourne works, CPB Contractors faced an information asymmetry problem. Data silos existed in each organisational unit, so useful, high-impact information could not be reported to other units, management or stakeholders.

Solution: Atlasopen worked on the Digital Command Centre, a concept that extracts data from siloed source systems into data visualisation dashboards for each organisational unit, visible across the organisation. We surfaced data from each unit on a rapid weekly cycle and, in the process, reimagined, engineered and re-engineered the data pipelines that extract, transform and load data across systems, databases and platforms.

Surfacing hidden insights

Democratising Data

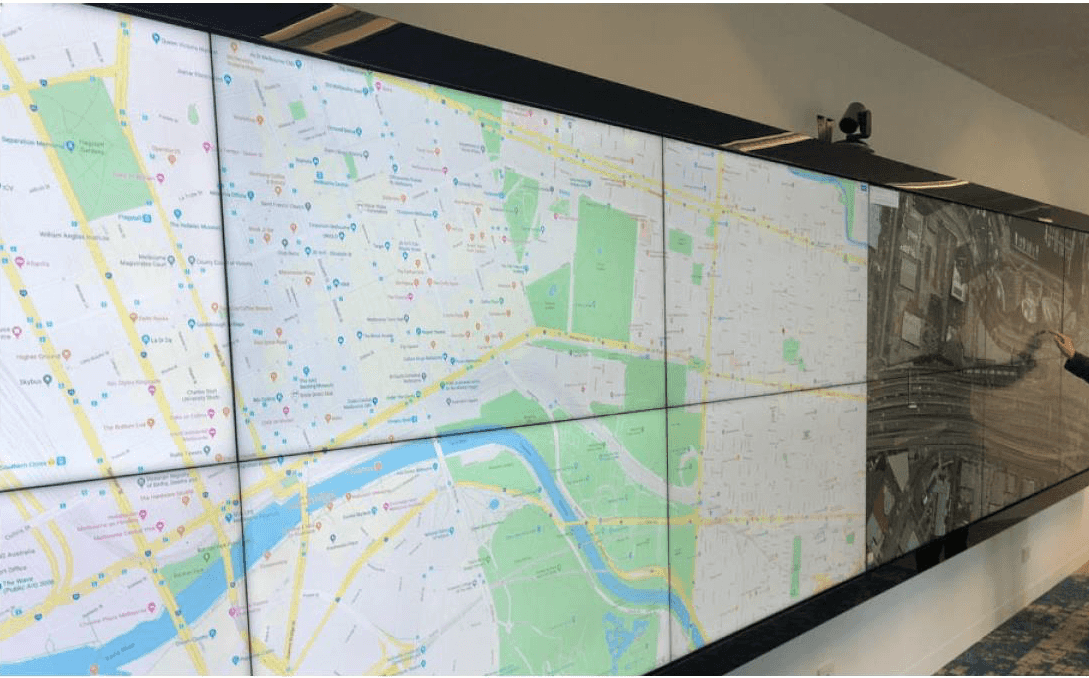

Previously unsurfaced data was democratised across all organisational units, available online via desktop and on a full-width video wall.

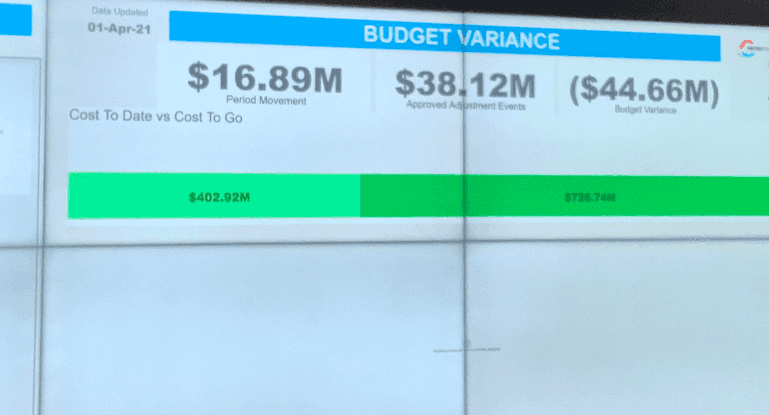

Critical metrics were brought to business units' attention on a weekly basis. This helped decision makers use key criteria that previously existed only within siloed business units and were not readily available. Democratising data was key to enabling real-time critical decision making.

Time matters when getting data to the right business units. The timeliness of data affects every aspect of the project: Project and Public Safety, Environment, Quality Control, Supply Chain Inventory and Stock Management, Resourcing, and Project Planning.

Surface Data Today

Visualising Key Data

Transforming data from different sources into comparable attributes and values is a big task. Our data engineers cleanse, wrangle and transform data to meet end-user needs, extracting it from many systems into one location.

Further steps are then taken to translate the data into a visual format used to interpret it and to inform predictions and decisions.
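The cleansing and transformation step described above can be illustrated with a minimal Python sketch. It is not the project's actual code: the source system names, field names, date formats and sample rows are all made up for illustration. It shows records from two differently shaped sources being mapped onto one common set of attributes so that they can be compared side by side.

```python
from datetime import date, datetime

# Hypothetical mappings: source field name -> common attribute name.
FIELD_MAPS = {
    "safety_system": {"IncidentDate": "date", "Site": "site", "Count": "value"},
    "inventory_system": {"recorded_on": "date", "location": "site", "qty": "value"},
}

# Hypothetical date format used by each source system.
DATE_FORMATS = {
    "safety_system": "%d/%m/%Y",
    "inventory_system": "%Y-%m-%d",
}


def normalise(source: str, record: dict) -> dict:
    """Map one raw record onto the common attributes and cleanse its values."""
    out = {"source": source}
    for src_field, common_field in FIELD_MAPS[source].items():
        raw = record[src_field]
        # Trim stray whitespace from text values before further cleansing.
        out[common_field] = raw.strip() if isinstance(raw, str) else raw
    # Bring every source onto the same types and conventions.
    out["date"] = datetime.strptime(out["date"], DATE_FORMATS[source]).date()
    out["site"] = out["site"].title()
    out["value"] = float(out["value"])
    return out


# Made-up sample rows, one per source system.
rows = [
    normalise("safety_system",
              {"IncidentDate": "03/02/2021", "Site": " site a ", "Count": "2"}),
    normalise("inventory_system",
              {"recorded_on": "2021-02-03", "location": "SITE A", "qty": 140}),
]

for row in rows:
    print(row)
```

Once both rows share the same `date`, `site` and `value` attributes, they can be loaded into one location and fed to a dashboard without source-specific handling.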

Want to understand and visualise your data?

Chat to us today

Visible and Interoperable Data Environment

We engineer data pipelines that programmatically run data extractions, transforms and loads so that the data arrives in the format the end user wants.

Each data pipeline is custom designed because the platforms that house the source data are not homogeneous. The engineering and platforms designed to house the end-user data must therefore be interoperable and flexible enough to accommodate both existing data structures and new or changing ones.
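One way to picture a custom pipeline per source feeding a shared destination is the sketch below. It is an assumption-laden illustration, not the architecture used on the project: the `Pipeline` class, the two extract functions, the in-memory `warehouse` list and the sample records are all hypothetical. Each pipeline supplies its own extract and transform logic, while every pipeline loads into the same target in the same shape.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

Record = dict


@dataclass
class Pipeline:
    """One source-specific pipeline: its own extract and transform, a shared load."""
    name: str
    extract: Callable[[], Iterable[Record]]
    transform: Callable[[Record], Record]
    load: Callable[[list], None]

    def run(self) -> int:
        rows = [self.transform(r) for r in self.extract()]
        self.load(rows)
        return len(rows)


# Stand-in for the shared destination (for example a reporting database).
warehouse: list = []


def extract_from_csv_export() -> Iterable[Record]:
    # Hypothetical rows as they might arrive from a flat-file export.
    yield {"unit": "quality", "defects": "3"}
    yield {"unit": "quality", "defects": "5"}


def extract_from_api() -> Iterable[Record]:
    # Hypothetical rows as they might arrive from a web API.
    return [{"team": "supply", "open_orders": 12}]


pipelines = [
    Pipeline(
        "quality",
        extract_from_csv_export,
        lambda r: {"unit": r["unit"], "metric": "defects", "value": int(r["defects"])},
        warehouse.extend,
    ),
    Pipeline(
        "supply",
        extract_from_api,
        lambda r: {"unit": r["team"], "metric": "open_orders", "value": int(r["open_orders"])},
        warehouse.extend,
    ),
]

# Run every pipeline and record how many rows each one loaded.
counts = {p.name: p.run() for p in pipelines}
print(counts)
print(warehouse)
```

Because the source-specific logic lives in the extract and transform functions, supporting a new or changed data structure means adding or adjusting one pipeline rather than reworking the shared destination.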

Enterprises, government sectors, organisational units, small and medium enterprises, and even small businesses face many of the same issues today. On top of this, data governance, security, quality and transparency are top of mind.

Learn How Data Can Transform Your Organisation